PySpark Read CSV From S3

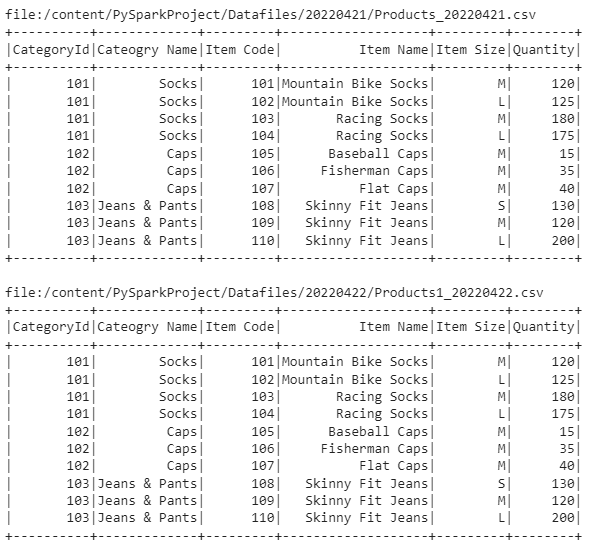

The requirement is to load CSV and Parquet files from S3 into a DataFrame using PySpark. Spark SQL provides `spark.read.csv(path)` to read a file or directory of files in CSV format into a Spark DataFrame; use `SparkSession.read` to access the underlying `DataFrameReader`. The `path` argument is a string, or list of strings, for input path(s), or an RDD of strings storing CSV rows, and the API reference carries a "Changed in version 3.4.0" note for this method. The `sparkContext.textFile()` method reads a text file from S3, and the same method can read from several other data sources. A typical script starts with `spark = SparkSession.builder.getOrCreate()` and then names the file to read. Once PySpark is set up, you can read the file from S3, and a Spark dataset can be written back to an AWS S3 bucket such as "pysparkcsvs3".
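A minimal sketch of the read described above. It assumes the session already has the S3A connector (`hadoop-aws`) and credentials configured; the helper names `s3a_uri` and `read_csv_from_s3` are illustrative and not part of PySpark, and the `header` and `inferSchema` settings are choices made for this example.

```python
def s3a_uri(bucket: str, key: str) -> str:
    """Build an s3a:// URI from a bucket name and an object key."""
    return f"s3a://{bucket}/{key.lstrip('/')}"


def read_csv_from_s3(spark, bucket: str, key: str):
    """Read one CSV object (or a prefix holding CSV objects) into a DataFrame.

    `spark` is an existing SparkSession. Treating the first row as a header
    and inferring column types are assumptions made for this sketch.
    """
    return spark.read.csv(s3a_uri(bucket, key), header=True, inferSchema=True)
```

With a session created by `SparkSession.builder.getOrCreate()`, a call such as `read_csv_from_s3(spark, "my-bucket", "data/people.csv")` would return the DataFrame; the bucket and key here are placeholders.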

Writing follows the same pattern as reading: `DataFrame.write.csv(path)` saves a DataFrame as CSV, and the target can be local disk, S3, or HDFS, with or without a header row. Accessing a CSV file locally uses the same `spark.read.csv(path)` call with a local path.
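The write side can be sketched in a few lines. The function name `write_csv` and the default `mode="overwrite"` are assumptions for illustration; `header` and `mode` are existing keyword arguments of `DataFrameWriter.csv`.

```python
def write_csv(df, path: str, with_header: bool = True, mode: str = "overwrite") -> None:
    """Write a DataFrame as CSV to a local, hdfs://, or s3a:// path.

    Spark writes a directory of part files at `path`, not a single file.
    `mode="overwrite"` replaces existing output; that default is a choice
    made for this sketch.
    """
    df.write.csv(path, header=with_header, mode=mode)
```

Calling `write_csv(df, "s3a://pysparkcsvs3/output", with_header=False)` would write the same data without a header row; the `output` prefix is a placeholder.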

Spark can also run SQL on files directly, without loading them into a DataFrame first. PySpark provides `csv(path)` on `DataFrameReader` to read a CSV file into a PySpark DataFrame, including when the data is read from an S3 bucket on a local machine. Notebook examples typically begin with a `%pyspark` paragraph that imports `regexp_replace` and `regexp_extract` from `pyspark.sql.functions`, together with types from `pyspark.sql.types`. For downloading the CSVs from S3, you will have to download them one by one.
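Running SQL on a file directly can look like the sketch below. The helper names are hypothetical; the `csv.` prefix followed by a backtick-quoted path is Spark SQL's syntax for querying a file in place. Because no reader options are passed this way, the columns are likely to come back with default names such as `_c0`, so this form suits quick inspection more than production reads.

```python
def direct_csv_query(path: str, columns: str = "*") -> str:
    """Build a Spark SQL statement that queries a CSV file in place."""
    # The `csv.` prefix selects the data source; backticks quote the path.
    return f"SELECT {columns} FROM csv.`{path}`"


def run_sql_on_csv(spark, path: str):
    """Run the query on an existing SparkSession and return a DataFrame."""
    return spark.sql(direct_csv_query(path))
```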

Reading S3 Data From a Local PySpark Session for the First Time

With PySpark you can easily and natively load a local CSV file (or Parquet file). Reading a CSV file from an AWS S3 bucket is the step that usually prompts questions, often with code borrowed from another website. Notebook examples begin with a `%pyspark` paragraph that imports `regexp_replace` and `regexp_extract` from `pyspark.sql.functions`, along with types from `pyspark.sql.types`.
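A local session generally needs the S3A connector and credentials before an `s3a://` path will resolve. The sketch below shows one possible setup and is not the only way to supply credentials. The function names are hypothetical, and the `hadoop-aws` version is left as a parameter because it has to match the Hadoop build bundled with the installed Spark.

```python
def s3a_session_conf(access_key: str, secret_key: str, hadoop_aws_version: str) -> dict:
    """Return Spark config entries for reading s3a:// paths from a local session."""
    return {
        # Pulls the S3A filesystem implementation at session start-up.
        "spark.jars.packages": f"org.apache.hadoop:hadoop-aws:{hadoop_aws_version}",
        # Static credentials; an assumption for this sketch only.
        "spark.hadoop.fs.s3a.access.key": access_key,
        "spark.hadoop.fs.s3a.secret.key": secret_key,
    }


def build_local_session(conf: dict, app_name: str = "read-csv-from-s3"):
    """Create a local SparkSession with the given config entries applied."""
    # Imported here so the config helper above can be used without Spark installed.
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.master("local[*]").appName(app_name)
    for key, value in conf.items():
        builder = builder.config(key, value)
    return builder.getOrCreate()
```

Keeping the configuration in a plain dictionary makes it easy to inspect or log the settings before the session is created.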

Creating the Session With `SparkSession.builder.getOrCreate()`

Spark SQL provides `spark.read.csv(path)` to read a CSV file into a Spark DataFrame and `DataFrame.write.csv(path)` to save one. The API reference marks the reader as changed in version 3.4.0.

Use `SparkSession.read` to Access the Reader

PySpark provides `csv(path)` on `DataFrameReader` to read a CSV file into a PySpark DataFrame. The `path` argument is a string, or list of strings, for input path(s), or an RDD of strings storing CSV rows. For downloading the CSVs from S3, you will have to download them one by one. In the other direction, a Spark dataset can be written to an AWS S3 bucket such as "pysparkcsvs3".
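Since `path` accepts either a single string or a list of strings, several CSV files can be read in one call. A small sketch, with hypothetical helper names and `header=True` as an assumed default:

```python
def as_path_list(paths) -> list:
    """Normalize a single path or an iterable of paths to a list of strings."""
    return [paths] if isinstance(paths, str) else list(paths)


def read_many_csv(spark, paths, header: bool = True):
    """Read one or more CSV paths into a single DataFrame.

    All files are expected to share the same columns.
    """
    return spark.read.csv(as_path_list(paths), header=header)
```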

Reading Data From an S3 Bucket on a Local Machine Using PySpark

The requirement is to load CSV and Parquet files from S3 into a DataFrame using PySpark. Accessing a CSV file locally works the same way, with `path` given as a string, or list of strings, for input path(s), or an RDD of strings storing CSV rows. Writing a CSV file to disk, S3, or HDFS, with or without a header, goes through the DataFrame writer.
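One way to cover both formats is to choose the reader from the file extension. This is a sketch under that assumption; `detect_format` and `load_from_s3` are illustrative names, and a directory of part files without an extension would need the format passed explicitly instead.

```python
import posixpath


def detect_format(uri: str) -> str:
    """Return "csv" or "parquet" based on the extension of the URI."""
    ext = posixpath.splitext(uri)[1].lower()
    if ext in (".csv", ".parquet"):
        return ext[1:]
    raise ValueError(f"Unsupported file extension in {uri!r}")


def load_from_s3(spark, uri: str):
    """Load a CSV or Parquet object into a DataFrame with the matching reader."""
    if detect_format(uri) == "csv":
        # header=True is an assumption about the file layout.
        return spark.read.csv(uri, header=True)
    return spark.read.parquet(uri)
```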