PySpark Read Text File

In plain Python, `f = open("details.txt", "r")` followed by `print(f.read())` finds the file in storage, opens it, and then reads its contents with the `read()` function. In PySpark, first import the necessary libraries. `SparkContext.textFile()` reads a text (.txt) file into an RDD. Spark SQL provides `spark.read.text("file_path")` to read a single text file or a directory of files into a Spark DataFrame (in SparkR, this creates a SparkDataFrame from a text file). The `name` parameter (a str) is the directory to the input data files…, and the `use_unicode` option was added in Spark 1.2. For example, if you have the following files… You can also build a DataFrame in memory, such as `df = spark.createDataFrame([("a",), ("b",), ("c",)], schema=["alphabets"])`, write it into a text file, and read it back.

Topics covered include reading a text file for processing and reading multiple text files into a single RDD. `spark.read` returns a `DataFrameReader`, which reads data from various sources such as CSV, JSON, Parquet, Avro, … For text, it loads the files and returns a DataFrame whose schema starts with a string column named `value`, followed by partition columns if there are any. Because text files have no enforced structure, they can contain data in a very convoluted fashion, or might have… A JSON file that holds an array of dictionary-like data can also throw an exception when read into PySpark. For delimited data, PySpark supports reading CSV files that use a pipe, comma, tab, space, or any other delimiter/separator. If you really need to handle an unusual format, you can write a new data reader that handles that format natively.

Basically, you would create a new data source that knows how to read such files. On the RDD side, the signature is `SparkContext.textFile(name, minPartitions=None, use_unicode=True)`, and it can read all text files matching a pattern into a single RDD. The following options can be used when reading log text files… The text file I created for this tutorial is called details.txt, and it looks something like this:

In this article, let's look at examples of both of these methods in Scala and PySpark. Some readers return pairs of strings rather than lines. Their signature ends in `…: bool = True) → pyspark.rdd.RDD[Tuple[str, str]]`, which means they return an RDD of (str, str) pairs. When I read it in and split it into 3 distinct columns, I get this (perfect):

First, create an RDD by reading a text file.

Out of the box, PySpark supports reading CSV, JSON, Parquet, and many more file formats into a PySpark DataFrame. With the RDD API, the setup is `from pyspark import SparkContext, SparkConf`, then `conf = SparkConf().setAppName("MyFirstApp").setMaster("local")`, then `sc = SparkContext(conf=conf)`, and finally `textFile = sc.textFile(…)`.

To keep this PySpark RDD tutorial simple, we use files from the local system or load data from a Python list to create an RDD.

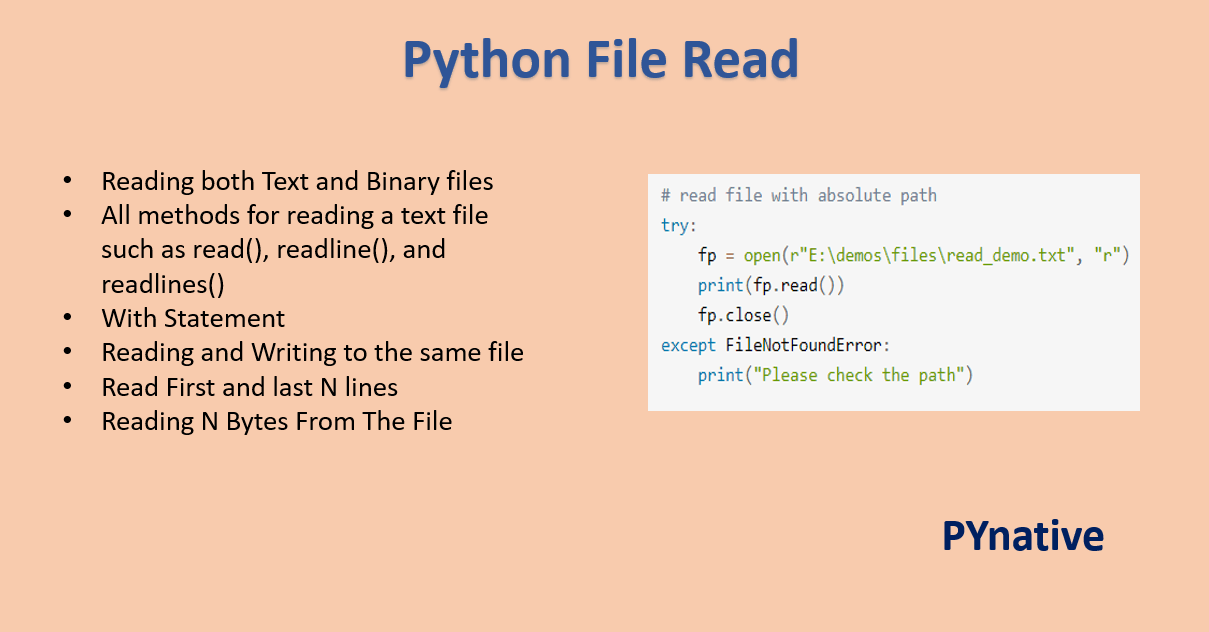

To work with DataFrames, import the entry point with `from pyspark.sql import SparkSession`.

This article shows you how to read Apache common log files. The write-and-read-back example starts with `import tempfile` and `with tempfile.TemporaryDirectory() as d:`, so the output goes to a throwaway directory.

Read All Text Files Matching a Pattern into a Single RDD

Spark provides several read options that help you read files.

Read All Text Files from a Directory into a Single RDD


To Read This File, Follow the Code Below


A Text File for Reading and Processing

The `pyspark.sql` module is used for working with structured data.